This case study is a guest post written by Titouan Galopin, lead engineer and product lead of the En Marche! project. Please note that this is a strictly technical article; any political comment will be automatically deleted.

Project Background

In April 2016, Emmanuel Macron, now President of France, created a political movement called "En Marche!" ("On the Move" in English), initially as a door-to-door operation to ask the public what was wrong with France.

Unlike established political parties, En Marche! didn't have any infrastructure, budget or members to support its cause. That's why En Marche! has relied on the power of the Internet from the very beginning to find supporters, promote events and collect donations.

I started to work for En Marche! as a volunteer in October 2016. The team was small and all of the IT operations were maintained by just one person. So they gladly accepted my proposal to help them. At that time, the platform was created with WordPress, but we needed to replace it with something that allowed faster and more customized development. The choice of Symfony was natural: it fits the project size well, I have experience with it and it scales easily to handle the large number of users we have.

Architecture overview

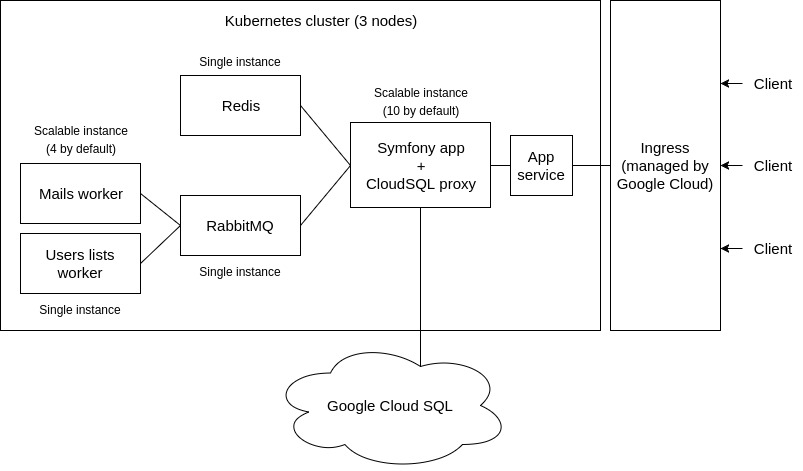

Scalability was the top priority of the project, especially after the issues the team faced with the first version of the platform, which wasn't built with Symfony. The diagram above shows an overview of the project architecture, which is highly scalable and redundant where needed:

We use Google Container Engine and Kubernetes to provide scalability, rolling updates and load balancing.

The Symfony app is built from the ground up as a Dockerized application. The configuration uses environment variables and the application is read-only to keep it scalable: we don't generate any files at run-time in the container. The application cache is generated when building the Docker image and is then synchronized amongst the servers using the Symfony Cache component combined with a Redis instance.
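
To illustrate the idea of configuring a read-only container purely through environment variables, here is a minimal sketch in Python (the project itself is written in PHP). The variable names `DATABASE_URL`, `REDIS_URL` and `APP_DEBUG` and the `AppConfig` class are hypothetical and are not taken from the En Marche! code base.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class AppConfig:
    """Immutable settings; nothing is read from or written to local files."""
    database_url: str
    redis_url: str
    debug: bool


def load_config(env=None):
    """Build the configuration from environment variables only.

    The variable names are hypothetical examples.
    """
    env = os.environ if env is None else env
    return AppConfig(
        database_url=env["DATABASE_URL"],  # required: fail fast if missing
        redis_url=env.get("REDIS_URL", "redis://localhost:6379"),
        debug=env.get("APP_DEBUG", "0") == "1",
    )
```

Because every instance receives the same variables, any number of identical containers can be started without sharing a filesystem.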

There are two workers, managed by RabbitMQ, that process heavy operations in the background: sending emails (sometimes we have to send 45k emails in a single request) and building the serialized JSON user lists that several parts of the application use to avoid slow and complex SQL queries.
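
The background-worker pattern can be sketched as follows. This is only an illustration in Python: an in-process `queue.Queue` stands in for RabbitMQ, and the job format, the `build_user_list` function and the `committee-42` name are hypothetical.

```python
import json
import queue
import threading


def build_user_list(users):
    """Serialize the users once so that readers can skip the slow SQL query."""
    return json.dumps(sorted(users, key=lambda user: user["id"]))


def worker(jobs, results):
    """Consume jobs until the None sentinel arrives."""
    while True:
        job = jobs.get()
        if job is None:
            break
        results[job["name"]] = build_user_list(job["users"])


jobs = queue.Queue()  # stand-in for the message broker
results = {}          # stand-in for wherever the JSON lists are stored
thread = threading.Thread(target=worker, args=(jobs, results))
thread.start()

# The web request only enqueues the job and returns immediately.
jobs.put({"name": "committee-42", "users": [{"id": 2}, {"id": 1}]})
jobs.put(None)  # sentinel: ask the worker to stop
thread.join()
```

The point of the design is that the expensive work happens outside the request/response cycle, so the web containers stay fast even when a single action triggers tens of thousands of emails.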

The database uses Google Cloud SQL, a centralized MySQL database we don’t have to manage. To connect to it, we use the Cloud SQL proxy Docker image.

Deployment

The project uses a Continuous Delivery strategy, which is different from the Continuous Deployment approach: each commit is automatically deployed on a staging server but the production deployment is manual. Google Container Engine and Kubernetes are the key components of our deployment flow.

The Continuous Delivery process, as well as the unit and functional tests, is handled by CircleCI. We also use StyleCI (to ensure that new code matches the coding style of the rest of the project) and SensioLabsInsight (to perform automatic code quality analyses). These three services are configured as checks that each Pull Request must pass before it can be merged.

When a Pull Request is merged, the Continuous Delivery process starts (see the configuration file):

- Authenticate on Google Cloud using a CircleCI environment variable.

- Build the JavaScript files for production.

- Build the three Docker images of the project (app, mails worker, users lists worker).

- Push the built images to Google Container Registry.

- Use the kubectl command line tool to update the staging server (a rolling update).

The only process performed manually (on purpose) is the SQL migration. Even though it could be automated, we prefer to carefully review those migrations before applying them, to prevent serious errors in production.

Front-end

The application front-end doesn't follow the single-page application pattern. In fact, we wanted to use as little JavaScript as possible to improve performance and rely on native browser features.

React + Webpack

The JavaScript code of the application is implemented using React compiled with Webpack. We don't use Redux - or even React-Router - but pure React code, and we load the components only in specific containers on the page, instead of building the whole page with them. This is useful for two reasons:

- The HTML content is fully rendered before React is loaded, and then React modifies the page contents as needed. This makes the application usable without JavaScript, even when the page is still loading on slow networks. This technique is called "progressive enhancement" and it dramatically improves the perceived performance.

- We use Webpack 2 with tree shaking and chunk loading, so the components of each page are only loaded when necessary and therefore do not bloat the minified application code.

This technique led us to organize the front-end code as follows:

- A front/ directory at the root of the application stores all the SASS and JavaScript files.

- A tiny kernel.js file loads the JavaScript vendors and application code in parallel.

- An app.js file loads the different application components.

- In the Twig templates, we load the components needed for each page (for example, the address autocomplete component).

Front-end performance

Front-end performance is often overlooked, but the network is usually the biggest bottleneck of your application. Saving a few milliseconds in the backend won't get you very far, but saving 3 or more seconds of loading time for the images will change the perception of your web site.

Images were the main front-end performance issue. Campaign managers wanted to publish lots of images, but users want fast-loading pages. The solution was to use powerful compression algorithms and apply a few other tricks.

First, we stored the image contents on Google Cloud Storage and their metadata in the database (using a Doctrine entity called Media). This allows us, for example, to know the image dimensions without needing to load the image. This helps us create a page layout that doesn't jump around while images load.
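
One common way to use stored dimensions to keep a layout stable is to reserve the image's aspect ratio with CSS before the file arrives. The sketch below, in Python for illustration, assumes this padding-based approach; the article does not say which CSS technique the project actually uses, and `placeholder_style` is a hypothetical helper.

```python
def placeholder_style(width, height):
    """Return inline CSS that reserves the image's aspect ratio before it loads.

    width and height are the pixel dimensions stored with the image metadata.
    """
    if width <= 0 or height <= 0:
        raise ValueError("image dimensions must be positive")
    ratio = height / width * 100  # height as a percentage of the width
    return f"padding-bottom: {ratio:.2f}%;"
```

A template could emit this style on the image wrapper, so the browser knows how much vertical space to keep free without downloading anything.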

Second, we combined the Media entity data with the Glide library to implement:

- Contextual image resizing: for example, the images displayed on the small grid blocks in the homepage can be much smaller and of lower resolution than the same images displayed as the main article image.

- Better image compression: all images are encoded as progressive JPEGs with a quality of 70%. This change improved the loading time dramatically compared to other formats such as PNG.

The integration of Glide into Symfony was made with a simple endpoint in the AssetController and we used signatures and the cache to mitigate DDoS attacks on this endpoint.
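
The reason signatures help is that an unsigned resizing endpoint lets an attacker request endless combinations of sizes, each one forcing a costly image transformation. The Python sketch below shows the general idea with an HMAC; it is not Glide's actual signing scheme, and `SECRET_KEY`, the `s` query parameter and the function names are hypothetical.

```python
import hashlib
import hmac
from urllib.parse import urlencode

SECRET_KEY = b"change-me"  # hypothetical; would come from an environment variable


def sign(path, params):
    """Compute an HMAC over the path and the sorted resize parameters."""
    message = path + "?" + urlencode(sorted(params.items()))
    return hmac.new(SECRET_KEY, message.encode(), hashlib.sha256).hexdigest()


def signed_url(path, params):
    """Append the signature so the endpoint can verify the request later."""
    query = urlencode(sorted(params.items()))
    return f"{path}?{query}&s={sign(path, params)}"


def is_valid(path, params, signature):
    """Reject any parameter combination that the application did not generate."""
    return hmac.compare_digest(sign(path, params), signature)
```

Only URLs produced by the application carry a valid signature, so the set of images that can ever be generated is bounded, and each of them can be cached after the first request.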

Third, we lazy loaded all images below the scroll, which consists of three steps:

- Load all the elements above the scroll as fast as possible, and wait for the ones below it.

- Load ultra-low-resolution versions of the images below the scroll (generated with Glide) and use local JavaScript code to apply a Gaussian blur filter to them.

- Replace these blurred placeholders when the high quality images are loaded.

We implemented an application-wide JavaScript listener to apply this behavior everywhere on the web site.

Forms

The project includes some interesting forms. The first one is the sign-up form on the web site: depending on the country and postal code fields, the city field changes from a text input to a prepopulated select list.

Technically there are two fields: “cityName” and “city” (the second one is the code assigned to the city according to the French regulations). The Form component populates these two fields from the request, as usual.

On the view side, only the cityName field is displayed initially. If the selected country is France, we use some JavaScript code to show the select list of cities. This JavaScript code also listens to the change event of the postal code field and makes an AJAX request to get the list of related cities. On the server side, if the selected country is France, we require a city code to be provided and otherwise we use the cityName field.
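
The server-side rule described above can be sketched as a small validation function. This is a Python illustration only (the real implementation is a Symfony form type); the function name, the error messages and the sample city code are hypothetical.

```python
def validate_address(country, city_code=None, city_name=None):
    """Return a list of error messages; an empty list means the address is valid."""
    errors = []
    if country == "FR":
        # French addresses must carry the city code chosen in the select list.
        if not city_code:
            errors.append("city code is required for France")
    elif not city_name:
        # Every other country falls back to the free-text city name.
        errors.append("city name is required")
    return errors


# Usage with hypothetical values:
print(validate_address("FR", city_code="75056"))   # []
print(validate_address("BE", city_name="Namur"))   # []
```

Because the rule is enforced on the server, the form still works when the JavaScript that swaps the fields never runs.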

This is a good example of the progressive enhancement technique discussed earlier in this article. Here, as everywhere else, the JavaScript code is just a helper that makes things nicer; it's not critical to making the feature work.

As these address fields are used a lot in the application, we abstracted them into an AddressType form type associated with an address JavaScript component.

The other interesting form is the one that lets you send an email to someone trying to convince them to vote for the candidate. It's a multi-step form that asks some questions about that other person (gender, age, job type, topics of interest, etc.) and then generates customized content that can be sent by email.

Technically the form combines a highly dynamic Symfony Form with the Workflow component, which is a good example of how to integrate both. The implementation is based on a model class called InvitationProcessor populated from a multi-step and dynamic form type and storing the contents in the session. The Workflow component was used to ensure that the model object is valid, defining which transitions were allowed for each model state: see InvitationProcessorHandler and workflows.yml config.
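
To show what "defining which transitions are allowed for each model state" means, here is a minimal state machine in Python. It is a stand-in for the Workflow component, not its API, and the state and transition names are hypothetical (the real ones live in the project's workflows.yml).

```python
class InvalidTransition(Exception):
    """Raised when a transition is not allowed from the current state."""


# Hypothetical transitions: name -> (required state, resulting state)
TRANSITIONS = {
    "fill_profile": ("start", "profile_filled"),
    "choose_topics": ("profile_filled", "topics_chosen"),
    "send": ("topics_chosen", "sent"),
}


class Invitation:
    """Model object that would be stored in the session between steps."""

    def __init__(self):
        self.state = "start"


def can(invitation, transition):
    """Tell whether the transition is allowed from the current state."""
    required_state, _ = TRANSITIONS[transition]
    return invitation.state == required_state


def apply(invitation, transition):
    """Move the model to the next state, or refuse if the step is out of order."""
    if not can(invitation, transition):
        raise InvalidTransition(f"{transition!r} not allowed from {invitation.state!r}")
    invitation.state = TRANSITIONS[transition][1]
```

With such a guard, a user who tampers with the session or skips a step cannot reach the final "send" stage with an incomplete model.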

Search Engine

The search engine, which is blazing fast and provides real-time search results, is powered by Algolia. The integration to index the application entities (articles, pages, committees, events, etc.) is made with the AlgoliaSearchBundle.

This bundle is really useful. We just added a few annotations to the Doctrine entities and, after that, the search index was automatically updated whenever an entity was created, updated or deleted. Technically, the bundle listens to the Doctrine events, so you don't need to do anything to keep the search contents up to date.
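
The event-listener mechanism can be sketched in a few lines. This Python example only mimics the pattern; `Repository` and `SearchIndex` are hypothetical classes, not the API of Doctrine, Algolia or the bundle.

```python
class SearchIndex:
    """In-memory stand-in for the remote search index."""

    def __init__(self):
        self.records = {}

    def on_saved(self, entity_id, data):
        self.records[entity_id] = data

    def on_deleted(self, entity_id):
        self.records.pop(entity_id, None)


class Repository:
    """Persists entities and notifies listeners after each change."""

    def __init__(self):
        self.rows = {}
        self.listeners = []

    def save(self, entity_id, data):
        self.rows[entity_id] = data
        for listener in self.listeners:
            listener.on_saved(entity_id, data)

    def delete(self, entity_id):
        self.rows.pop(entity_id, None)
        for listener in self.listeners:
            listener.on_deleted(entity_id)


index = SearchIndex()
articles = Repository()
articles.listeners.append(index)  # registered once, at boot time

articles.save(1, {"title": "Rally in Lyon"})
articles.delete(1)
```

Application code only ever calls `save` and `delete`; the index stays in sync as a side effect, which is why no extra indexing calls are scattered through the controllers.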

Security

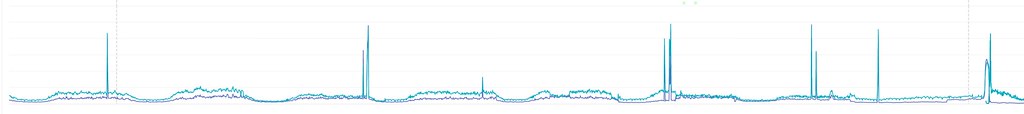

Like any other high-profile web site, we were the target of some attacks coordinated and carried out by powerful organizations. Most of the attacks were brute-force in nature and the aim was to take the web site down rather than infiltrate it.

The web site was targeted by DDoS attacks eight times in the whole campaign, five of them in the final two weeks. They had no impact on the Symfony app because of the Cloudflare mitigation and our on-demand scalability based on Kubernetes.

First, we suffered three attacks based on WordPress pingbacks. The attackers used thousands of hacked WordPress websites to send pingback requests to our website, quickly overloading it. We added some checks in the nginx configuration to mitigate this attack.
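
The kind of check involved can be illustrated with a short filter. This Python sketch assumes the pingback requests can be recognised by their User-Agent header, which in WordPress typically mentions both "WordPress" and "pingback"; the actual rule lives in the project's nginx configuration and may differ. The function names and the sample header are illustrative.

```python
def is_pingback_request(user_agent):
    """Detect a User-Agent that looks like a WordPress pingback verification."""
    normalized = user_agent.lower()
    return "wordpress" in normalized and "pingback" in normalized


def status_for(user_agent):
    """Refuse pingback traffic before it reaches the application."""
    return 403 if is_pingback_request(user_agent) else 200


# Illustrative header value, not captured from the real attack:
sample = "WordPress/4.7; http://example.com; verifying pingback from 203.0.113.7"
print(status_for(sample))         # 403
print(status_for("Mozilla/5.0"))  # 200
```

Rejecting these requests at the web server is cheap, so thousands of compromised sites cannot force the PHP application to do any work.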

The other attacks were more sophisticated and required both Cloudflare and Varnish to mitigate them. Using Cloudflare to cache assets was so efficient that we thought there was no need for a reverse proxy. However, a reverse proxy proved necessary during DDoS attacks: in the last days of the campaign, the attacks were huge (up to 300,000 requests per second) and we had to disable the user system and enable the "Cache Everything" flag on Cloudflare.

There's nothing you can do to prevent security attacks, but you can mitigate them by complying with the best practices of Symfony, which, by the way, is one of the few open source projects that has conducted a public security audit.

Open Source

The en-marche.fr web platform and its related projects have been open sourced in the @EnMarche GitHub account. We didn't promote this much, though, because open source is pretty complex to explain to non-technical people. However, we received some contributions from people who found the project and were glad that it was open source.

We are also thinking about giving back to Symfony by contributing some elements developed for the project. For example, the UnitedNationsCountryType form type could be useful for some projects. We also developed an integration with the Mailjet service that could be released as a Symfony bundle.

Comments

What I'm missing in the introduction is what this project is about exactly: is it a CMS? And what were the technical requirements (what must users be able to do with it, etc.)?

Good to see this open-source and definitely a case study!

@Sebastiaan we didn't explain in detail, but it's a web platform for a political party, so you can expect: articles/news of the candidate, forms to collect donations, forms to sign up for the party, a lot of multimedia contents (videos, photos of political rallies), etc. So it's not only a CMS but a more general-purpose platform.

@Sebastiaan There are two main parts in the application :

Thanks for the explanation 👍

Thanks for sharing this story. You made my day. For all of us that work on Open Source this is incredible. We have no idea of the power we can have when writing a few lines of code or documentation.

Without thinking about it, by working in Symfony we helped change the course of a whole country, and in this particular case of Europe too. This is incredible!

Great explanation. Maybe it is possible to do this in my country. Having the whole project open source is perfect for me; it's incredible!

Regards

Very educative use case, nice!

This is really interesting; thank you for sharing.

Some of the links in the post will rot in the future, but they can be replaced with ones that will remain accessible for as long as GitHub lives. For example, the link to the WordPress pingback check in the nginx config:

bad: https://github.com/EnMarche/en-marche.fr/blob/master/docker/prod/nginx.conf#L59

good: https://github.com/EnMarche/en-marche.fr/blob/d555956d648db8a70ed5a259fe192cd0b95704db/docker/prod/nginx.conf#L59

Excellent case study with very interesting technical examples, thanks for sharing !

How do you manage and deliver assets in this setup? I mean, you have multiple app instances, and each instance could write an asset (let's say, an image). How do you manage where to serve this image from? Are the images embedded in the source code, are they distributed among every instance of the cluster, or do you just use a CDN?

Very nice! Beautiful work

When you say "we don't generate any files at run-time", what about the images generated by Glide? Do you warm up all of them on deploy, in all their sizes? Assetic has the assetic:dump command.

This is the best case study I have ever read. Be sure to update it with the good questions/answers from the comments here.

Now I know I want to replicate your setup over the weekend.

Thank you so much!

Great :D . thanks for sharing !

@Demostenes Garcia That's a great question. We use Flysystem (https://flysystem.thephpleague.com) with https://github.com/Superbalist/flysystem-google-cloud-storage to upload assets to Google Cloud Storage, and we use it as a backend for Glide. The combination of Glide with Flysystem is the key here, as it allows us to quickly set up uploads for the Media entities (https://github.com/EnMarche/en-marche.fr/blob/2e42d5e56149b91cc78f8d171938035217cefefb/src/AppBundle/Admin/MediaAdmin.php#L56).

We actually don't have a dedicated CDN: we rely solely on Cloudflare to act as a CDN with HTTP cache, and it works really well. In addition to this, Glide has its cache in Redis (https://github.com/EnMarche/en-marche.fr/blob/2e42d5e56149b91cc78f8d171938035217cefefb/app/config/services/cms.xml#L73).

@Pedro Casado As explained right above, we store images on Google Cloud Storage and we use Glide to resize images and store the resized versions in Redis.

@Laurynas Mališauskas @ahmedsfny @Tomáš Votruba @Quentin Fahrner thanks for the feedback!

Thanks for sharing. And for your answers.

Thanks for sharing this, it was very interesting to hear that Symfony was behind this campaign.

Great work

Hello, could you tell us more about:

"The application cache is generated when building the Docker image and then is synchronized amongst the servers using the Symfony Cache component combined with a Redis instance."

Where is the synchronization part? Also, the cache directory structure could change while the application runs, so are your containers really "read-only"? At least two things will be written to the containers:

I suppose you don't use log files since you log everything to Sentry, so you can ignore log files there. But more importantly: where is this cache centrally stored, and how exactly is it synchronized between servers?

Hello @Pawel F,

In Symfony, there are two types of cache: app cache and system cache:

The notion of app/system cache is native in Symfony, so we only had to configure the app cache (the system cache is automatically configured to use the filesystem): https://github.com/EnMarche/en-marche.fr/blob/master/app/config/config.yml#L47.

To connect the app cache to Redis, we use https://github.com/snc/SncRedisBundle and we inject it as a session handler and a Doctrine cache provider into Symfony.

Hello @Titouan,

During the DDoS attacks, did you use the cluster autoscaler (https://cloud.google.com/container-engine/docs/cluster-autoscaler)?

Thanks!

@Guillaume Charhon Strangely enough, we didn't. Cloudflare has pretty powerful algorithms that usually spare us from having to scale on our end (it starts blocking automated visits when too much traffic comes through). The only time auto-scaling would have been useful was on the night of the first round, when we learned we had qualified for the second round: there was a huge amount of traffic from real humans, so we had to handle it manually. With auto-scaling we might have handled it automatically. However, as it would have been really useful only once or twice in the campaign, I think we would have spent too much time configuring it instead of focusing on actual features, relative to the advantages it would have brought.

Hi @Titouan,

Are the workers managed by RabbitMQ available on GitHub? I can't seem to find them here: https://github.com/EnMarche/worker.

Thanks